What a Real AI Roadmap Looks Like (Beyond Slides & Buzzwords)

10 min read

Feb 18, 2026

Most CEOs I talk to feel caught between two pressures: boards demanding faster AI adoption and teams signaling they're not ready. That tension is real.

What a Real AI Roadmap Actually Is

Why AI roadmaps aren't just digital strategies

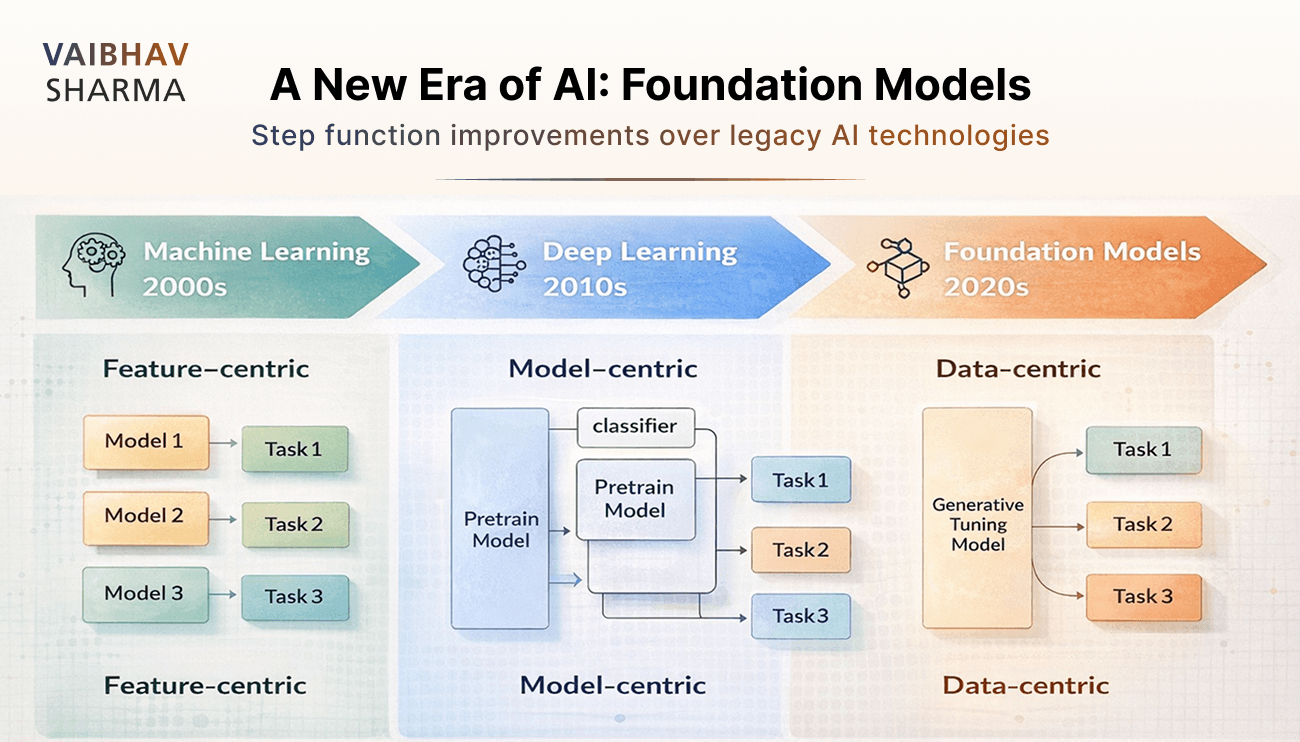

Most executives treat AI roadmaps like digital transformation projects. That's a mistake.

Digital transformation modernizes your foundation—cloud platforms, data architecture, security frameworks. It's broad by design, touching everything over multiple years. AI roadmaps are different. They're surgical. They target specific high-value problems and reshape particular workflows.

The implementation approach changes too. Digital transformation spans the enterprise. AI roadmaps create focused experiments within business units that deliver value fast.

Success metrics tell the story. Digital initiatives measure adoption rates and modernization benchmarks. AI roadmaps track direct business outcomes: Did cycle time decrease? Did accuracy improve? Did costs drop?

When I work with companies, the most successful ones align these approaches instead of choosing between them. AI identifies which processes to fix first. Digital modernization removes the friction that blocks AI from scaling.

What AI roadmaps actually accomplish

Once you move beyond pilot projects, structured roadmaps become essential. Here's what they do:

Strategic alignment: They connect AI initiatives to measurable business objectives instead of letting them float as technical experiments.

Resource optimization: By identifying high-impact, low-risk use cases first, roadmaps prevent the scattered investments I see at most companies.

Governance foundation: They establish clear accountability for data, models, and outcomes before compliance issues can derail everything.

Technical architecture: Roadmaps define the infrastructure requirements—from data pipelines to deployment mechanisms—that sustainable AI actually needs.

Without this structure, I've watched well-funded AI initiatives fragment across business units. Each group chases its own use cases without integration. The result? Expensive proof-of-concepts that never scale.

Why most AI strategies fail before they start

The biggest failures aren't technical—they're strategic. Here's what goes wrong:

Technology-first thinking: Teams start with AI capabilities and hunt for problems to solve. This produces technically impressive features that nobody actually needs.

Ambition without execution: Companies define bold strategies but delay implementation, waiting for perfect conditions that never arrive. Strategy without execution is just expensive planning.

Missing operational details: Vision documents lack specifics on data readiness, team structure, and implementation sequence. They describe the destination but not the journey.

FOMO-driven decisions: The pressure to 'do something with AI' leads to rushed projects without clear business objectives. I've seen technically sound solutions that create zero business impact.

The companies that succeed recognize something important: execution isn't separate from strategy. It is the strategy.

How to Set Clear, Measurable AI Objectives

Using SMART goals to define AI success

The SMART framework works for AI, but most executives skip the hard parts. They define specific goals but ignore whether those goals are actually attainable with their current data and infrastructure.

Here's how to apply SMART without fooling yourself:

Specific: Name exactly what changes. Not 'improve business performance'—that tells your team nothing. Instead: 'Reduce manual invoice processing time by 30%'. Your finance team knows what that means.

Measurable: Pick metrics you're already tracking. If you don't currently measure ticket resolution time, don't make that your AI success metric. Start with data you trust.

Attainable: This is where most AI projects die. Your data scientist says the model can achieve 95% accuracy. Your operations team says anything under 98% creates more work than it saves. Bridge that gap before you build anything.

Realistic: AI amplifies what you already have. If your current process is broken, AI will make it broken faster.

Time-bound: Set deadlines that account for learning time. 'Increase Net Promoter Score by 15% by Q3' assumes you know what levers to pull. Build in time to discover those levers.
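The 'Attainable' gap between 95% and 98% is really a break-even calculation: every AI error has to be caught and fixed by someone, so accuracy only pays off above the point where fix-up work cancels out the time saved. A minimal sketch of that arithmetic, assuming a simple per-item cost model; all three minute values are hypothetical placeholders, not figures from any real process:

```python
# Hypothetical per-item costs, in minutes. Replace with numbers measured
# in your own process; these are illustrative only.
MANUAL_MINUTES = 6.0       # handling one item fully by hand
REVIEW_MINUTES = 2.0       # a person checking each AI output
ERROR_FIX_MINUTES = 200.0  # cleaning up one AI error that slips through


def net_minutes_saved(accuracy: float) -> float:
    """Minutes saved per item versus the manual process (negative = net loss)."""
    error_rate = 1.0 - accuracy
    ai_cost = REVIEW_MINUTES + error_rate * ERROR_FIX_MINUTES
    return MANUAL_MINUTES - ai_cost


def break_even_accuracy() -> float:
    """Accuracy at which the AI-assisted process costs the same as manual work."""
    # Solve MANUAL = REVIEW + (1 - acc) * FIX for acc.
    return 1.0 - (MANUAL_MINUTES - REVIEW_MINUTES) / ERROR_FIX_MINUTES


if __name__ == "__main__":
    print(f"Break-even accuracy: {break_even_accuracy():.1%}")
    for acc in (0.95, 0.99):
        print(f"{acc:.0%} accurate -> {net_minutes_saved(acc):+.1f} min per item")
```

With these made-up numbers, break-even lands at 98%, and a 95%-accurate model adds about six minutes of work per item instead of saving any. The point is less the exact threshold than forcing both teams to agree on the cost inputs before anyone builds a model.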

Aligning AI goals with business KPIs

The fastest way to kill an AI project is to create new metrics that leadership doesn't care about. Stick to KPIs that already matter.

I organize AI impact into four categories:

Operational KPIs show whether AI actually improves workflow. Cycle time, error rates, throughput—metrics that operations teams watch daily.

Financial KPIs answer the CFO's question: 'Is this worth the investment?' Cost per transaction, ROI, working capital improvements. These matter more than technical metrics.

Customer KPIs reveal whether AI helps or hurts the customer experience. Response time, satisfaction scores, resolution rates.

Employee KPIs indicate if AI makes work better or just different. Productivity, workload distribution, retention rates. This often gets overlooked until good people start leaving.

One study found that organizations tracking AI-enabled KPIs were five times more likely to align incentives with objectives. That's because they measured business outcomes, not technical achievements.

Examples of AI business use cases with measurable outcomes

Every AI use case should answer this question: Which specific KPI improves, and by how much? If you can't answer that clearly, you're building a solution looking for a problem.

Here's what works:

Contract review: 'Cut contract turnaround time by 40 hours monthly'. Legal teams know exactly what that saves in billable hours and deal velocity.

Customer retention: 'Increase retention by 5% in six months through early churn prediction'. Sales teams can calculate the revenue impact immediately.

Supply chain: 'Reduce errors causing delays by 15%'. Operations knows what that means for customer satisfaction and costs.

Document processing: 'Process invoices 30% faster with 90% fewer errors'. Finance can quantify the FTE savings.

Notice what these examples share: each names a specific outcome and a number you can measure, and the strongest also fix the measurement period. Good objectives acknowledge dependencies too: a marketing team trying to reduce acquisition costs, for instance, needs clean customer data and accurate tagging.
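One way to keep objectives honest is to record each one as data: the KPI, its baseline, the target, and the deadline. The sketch below is a hypothetical structure (the class and field names are illustrative, not from any particular tool) that answers "which KPI improves, by how much, and by when" and reports progress against it:

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AIObjective:
    """One measurable AI objective: which KPI moves, by how much, and by when."""
    kpi: str         # an existing KPI the business already tracks
    baseline: float  # measured before the AI system goes live
    target: float    # the value that counts as success
    deadline: date   # end of the measurement period

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works for KPIs that should fall (cycle time) or rise (retention).
        """
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap

    def is_met(self, current: float, as_of: date) -> bool:
        """True if the target has been reached within the measurement period."""
        return as_of <= self.deadline and self.progress(current) >= 1.0


# Hypothetical example: cut average invoice processing time by 30% (10 -> 7 minutes).
invoices = AIObjective("avg_invoice_minutes", baseline=10.0, target=7.0,
                       deadline=date(2026, 9, 30))
print(invoices.progress(8.5))  # 0.5: half the gap closed
```

Storing the baseline explicitly matters: without it, "30% faster" has nothing to be measured against once the AI system is live.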

The key insight: AI objectives must start with business problems, not available technology. When you establish clear metrics before implementation, you can tell the difference between AI that saves minutes versus AI that changes how work gets done. That distinction determines whether your AI initiative scales or stays a pilot forever.

Auditing Your AI Readiness: Data, Tools, and People

Data quality and availability for AI models

Your data is probably messier than you think. Poor data quality costs organizations an average of $15 million a year, and 60% of AI failures come down to data issues. But the problem isn't just messy data; most companies audit the wrong things.

They check if data exists. They should check if it's usable.

In one healthcare network, we had complete patient records going back twenty years. Perfect completeness scores. But the data came almost entirely from urban hospitals, so rural patients were systematically underrepresented. The AI learned patterns that didn't work for half their market.

Here's what to audit instead:

Accuracy - Does your data reflect what actually happened, not what should have happened?

Representativeness - Does your data cover the full range of scenarios your AI will encounter in production?

Consistency - Can you trust that the same measurement means the same thing across different systems and time periods?

Timeliness - Is your data fresh enough to be relevant when decisions get made?

Most companies focus on completeness—filling in missing fields—while ignoring whether their complete data actually represents their business reality.
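Two of these checks are easy to automate. The sketch below, under simple assumptions, compares each segment's share of the training data with its expected share in production (representativeness) and measures how much of the data is older than a chosen cutoff (timeliness). The segment labels, the 0.5 tolerance, and the example numbers are hypothetical; a real audit would pull expected shares from your own market or patient-population data:

```python
from collections import Counter
from datetime import datetime, timedelta


def representation_gaps(train_segments, population_shares, min_ratio=0.5):
    """Return segments whose share of the training data falls below
    min_ratio times their share of the population the model will serve.

    train_segments: one segment label per training record (e.g. "urban").
    population_shares: segment -> expected share in production.
    min_ratio: hypothetical tolerance; 0.5 means "under half the expected share".
    """
    counts = Counter(train_segments)
    total = sum(counts.values())
    gaps = {}
    for segment, expected in population_shares.items():
        observed = counts[segment] / total if total else 0.0
        if expected > 0 and observed / expected < min_ratio:
            gaps[segment] = {"observed": observed, "expected": expected}
    return gaps


def stale_fraction(timestamps, now, max_age):
    """Share of records older than max_age, a simple timeliness check."""
    timestamps = list(timestamps)
    if not timestamps:
        return 0.0
    stale = sum(1 for ts in timestamps if now - ts > max_age)
    return stale / len(timestamps)


if __name__ == "__main__":
    # Illustrative numbers only: 95 urban records, 5 rural, serving a 50/50 market.
    segments = ["urban"] * 95 + ["rural"] * 5
    print(representation_gaps(segments, {"urban": 0.5, "rural": 0.5}))
```

A dataset like the healthcare example above would pass every completeness check and still be flagged here, because the question is whether the data matches the population, not whether fields are filled in.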

Infrastructure requirements for scalable AI

Traditional IT infrastructure won't cut it. AI workloads are different—they need massive compute power for training, efficient storage for datasets, and networking that can handle continuous data movement.

But here's where most infrastructure planning goes sideways: organizations build for training when they should build for production.

I've seen companies spend months optimizing training pipelines, then discover they can't serve predictions fast enough for their application. They built research infrastructure when they needed business infrastructure. Production-ready AI infrastructure needs four things:

- Compute that scales - AI training consumes massive resources for weeks, but inference needs consistent, predictable performance

- Storage that performs - Object storage like S3 handles large datasets cost-effectively, but access patterns matter more than capacity

- Networking that flows - High-bandwidth connections (100+ Gbps) have become standard because AI systems move data constantly

- Orchestration that works - Kubernetes, extended with ML toolkits like Kubeflow, manages complex workflows without requiring PhD-level expertise
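The cheapest way to avoid the "can't serve predictions fast enough" surprise is to measure inference latency against the application's budget early, before investing in training optimizations. A minimal sketch, assuming `predict` is a hypothetical callable wrapping your model's serving path and that the latency budget comes from your own product requirements:

```python
import statistics
import time


def p95_latency_ms(predict, inputs, warmup=5):
    """Call predict() on each input and return the 95th-percentile latency in ms.

    predict is a hypothetical stand-in for your model's serving path.
    """
    if len(inputs) < 2:
        raise ValueError("need at least two inputs to estimate a percentile")
    for x in inputs[:warmup]:
        predict(x)  # warm-up calls are excluded from the measurement
    samples = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=20) returns 19 cut points; the last is the 95th percentile.
    return statistics.quantiles(samples, n=20)[-1]


def meets_latency_budget(predict, inputs, budget_ms):
    """True if p95 latency fits the application's (assumed) latency budget."""
    return p95_latency_ms(predict, inputs) <= budget_ms
```

Checking a tail percentile rather than the average reflects the point about inference needing consistent, predictable performance: a model that is fast on average but slow one time in twenty can still break a user-facing application.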

Smart companies now use hybrid approaches: public cloud for variable training loads, private infrastructure for production inference, and edge processing for real-time decisions.

Skill gaps and team structure for AI integration

Here's the uncomfortable truth: 51% of organizations lack the skilled AI talent needed to execute their strategies. With AI spending hitting $550 billion in 2024 and a 50% talent gap, this problem isn't getting easier.

But the real issue isn't finding more data scientists. It's building teams that can actually ship AI systems that work.

Most companies hire AI builders—machine learning engineers, data scientists, data engineers. Then they wonder why their models never make it to production or solve real business problems.

You need AI translators: product managers who understand both AI capabilities and business needs, subject matter experts who can spot when models miss important patterns, designers who can make AI systems usable.

The best AI teams I've seen use pod structures: cross-functional groups with both builders and translators working on specific business domains. They organize around problems—customer engagement, supply chain optimization—not technologies.

Traditional hierarchical structures kill AI initiatives. Too many approval layers, too much time between problem identification and solution deployment.

Build for speed, not structure.

Designing a Lean AI Pilot That Proves Value Fast

Choosing a high-impact, low-risk use case

Most AI pilots fail because they optimize for technical novelty instead of business impact. I've watched teams build sophisticated models that solve interesting problems nobody actually cares about.

Start with pain, not possibility.

The best first AI projects share three characteristics: they address a problem people already recognize, they have clear success metrics, and they don't require rebuilding your entire infrastructure.

Document processing consistently emerges as a smart starting point. Not because it's sexy, but because it's measurable. 'Reduce contract review time by 40%' is a goal you can track. 'Improve document workflows' isn't.

| High-Impact Pilots | Low-Impact Pilots |
|---|---|
| Address recognized pain points | Solve theoretical problems |
| Have clear, quantifiable metrics | Lack defined success criteria |
| Minimal implementation complexity | Require extensive system integration |
| Visible results to stakeholders | Hidden background processes |

Building something testable fast

Skip the 'minimum viable model' terminology. Build something that works well enough to test your assumptions within 30 days.

This means starting with existing solutions, not building from scratch. Use pre-trained models. Focus on proving the business case, not technical sophistication. Work through these steps:

- Define the specific problem (not 'improve efficiency')

- Prepare representative data (quality matters more than quantity)

- Start with pre-trained models when possible

- Create a simple interface people will actually use

- Set clear evaluation criteria tied to business outcomes

Your data will never be perfect. That's fine. The goal is testing whether there's enough signal to justify investment, not achieving perfection.

Measuring what matters

Technical metrics like precision and recall matter, but they don't tell you if your pilot succeeded.

Precision measures how often your AI is right when it flags a case. Recall measures what share of the relevant cases it actually catches. Both are important, but neither answers the crucial question: 'Are people actually using this?' A pilot scorecard needs all three layers:

- Technical performance (precision, recall, F1 score)

- Business metrics tied to your original objectives

- User adoption rates over time
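For the technical layer, precision, recall, and F1 come straight from counting the model's hits and misses. A small dependency-free sketch for binary labels (1 = the case the model should flag); in practice a library such as scikit-learn provides the same metrics, but the counting makes the definitions concrete:

```python
def precision_recall_f1(y_true, y_pred):
    """Binary precision, recall, and F1 from parallel sequences of 0/1 labels.

    Precision: of the cases the model flagged, how many were truly positive.
    Recall: of the truly positive cases, how many the model flagged.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

These numbers cover only the first layer; the business metrics and adoption rates still have to be tracked separately against the objectives set before the pilot started.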

Most pilots that look technically successful but fail to scale have this in common: nobody uses them consistently after month three.
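Catching that month-three drop-off means tracking the same users over time rather than a raw monthly-active count, which new sign-ups can inflate. A minimal cohort sketch, assuming you can export the set of active user IDs per month; the 50% floor is a hypothetical threshold:

```python
def cohort_retention(active_by_month):
    """For each month, the share of month-1 users who are still active.

    active_by_month: list of sets of user IDs, one set per month, month 1 first.
    """
    if not active_by_month or not active_by_month[0]:
        return []
    cohort = set(active_by_month[0])
    return [len(cohort & set(users)) / len(cohort) for users in active_by_month]


def adoption_fades(active_by_month, after_month=3, floor=0.5):
    """True if retention drops below `floor` in any month after `after_month`.

    floor is a hypothetical threshold; set it from your own adoption targets.
    """
    rates = cohort_retention(active_by_month)
    later = rates[after_month:]  # index 0 is month 1, so this starts at month after_month + 1
    return bool(later) and min(later) < floor


# Illustrative data: four pilot users, only one still active in months 4 and 5.
months = [{"a", "b", "c", "d"}, {"a", "b", "c", "d"}, {"a", "b", "c"}, {"a"}, {"a"}]
print(cohort_retention(months))  # [1.0, 1.0, 0.75, 0.25, 0.25]
```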

Not every pilot works. That's valuable information too. The goal isn't perfect success—it's learning fast enough to make better decisions about where to invest next.

Scaling AI with MLOps and Infrastructure Automation

CI/CD pipelines for machine learning

Most companies try to deploy AI models the same way they deploy software. This doesn't work.

Software CI/CD validates code. AI models need validation for code, data, model performance, and schema changes. When a model fails in production, it's rarely because the code broke; more often the data shifted or the model drifted. At minimum, an ML pipeline adds three safeguards:

- Automated testing that checks model accuracy before deployment

- Data validation that catches schema changes before they break your system

- Rollback mechanisms when models perform worse than the previous version
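The three safeguards can be combined into a single deployment gate. The sketch below is a simplified illustration, not any specific CI/CD product's API: the schema, the scores, and the decision labels are hypothetical, and a real pipeline would run this as a pipeline step against a held-out evaluation set and a sample of recent production inputs:

```python
# Hypothetical input schema for a fraud model: column name -> expected Python type.
EXPECTED_SCHEMA = {"amount": float, "country": str, "account_age_days": int}


def schema_errors(rows, schema):
    """List schema problems (missing, unexpected, or mistyped columns) in a batch."""
    errors = []
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()
        unexpected = row.keys() - schema.keys()
        if missing:
            errors.append(f"row {i}: missing {sorted(missing)}")
        if unexpected:
            errors.append(f"row {i}: unexpected {sorted(unexpected)}")
        for column, expected_type in schema.items():
            if column in row and not isinstance(row[column], expected_type):
                errors.append(f"row {i}: {column} is {type(row[column]).__name__}")
    return errors


def deployment_decision(candidate_score, production_score, batch, schema, min_gain=0.0):
    """Decide whether a candidate model replaces the one in production.

    Scores are any higher-is-better offline metric (e.g. recall on a held-out set).
    """
    if schema_errors(batch, schema):
        return "block"      # data no longer matches what the model expects
    if candidate_score < production_score + min_gain:
        return "rollback"   # keep (or restore) the previous model version
    return "promote"
```

Checking the schema before comparing scores is deliberate: if the inputs have changed shape, any accuracy comparison computed on them is not trustworthy.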

The companies that get this right can update models weekly instead of waiting months. They catch problems before customers do.

Model versioning and feature stores

I see the same mistake repeatedly: teams build great models, then can't recreate them six months later.

Model versioning isn't just about storing different versions. It's about tracking exactly what data, features, and parameters created each model. When your model starts performing poorly, you need to know whether it's a data issue, feature engineering problem, or model drift.
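The core idea behind reproducible versioning is that a model's version should be derived from everything that produced it. A minimal sketch, assuming training data is available as bytes, parameters are JSON-serializable, and `code_version` is something like a git commit hash; in practice you would likely store such records in an experiment tracker rather than hand-roll them:

```python
import hashlib
import json


def model_lineage(training_data: bytes, features: list, params: dict, code_version: str) -> dict:
    """Build a lineage record and a reproducible version ID for one trained model.

    code_version is assumed to be something like a git commit hash.
    """
    record = {
        "data_sha256": hashlib.sha256(training_data).hexdigest(),
        "features": list(features),  # order kept: many models depend on column order
        "params": params,
        "code_version": code_version,
    }
    # sort_keys gives the same JSON, and so the same hash, regardless of dict order.
    canonical = json.dumps(record, sort_keys=True)
    record["version_id"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
    return record
```

When a model degrades, comparing two lineage records shows immediately whether the data hash, the feature list, the parameters, or the code changed, which is exactly the diagnosis the paragraph above calls for.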

Feature stores solve a different problem—the 'feature engineering tax.' Every team ends up rebuilding the same features: customer lifetime value, product affinity scores, seasonal adjustments.

Smart organizations build these once and share them. The savings compound quickly—instead of six teams spending weeks building customer features, they reuse what already works. Plus, consistent features mean models trained on different data can actually be compared.
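The "build once, share everywhere" part of a feature store can be illustrated with a small registry. This is a toy, in-memory sketch with hypothetical names; production feature stores also handle storage, serving, and keeping training and serving values consistent, none of which is shown here:

```python
from typing import Any, Callable, Dict, Iterable

FeatureFn = Callable[[Dict[str, Any]], float]


class FeatureRegistry:
    """A toy in-memory stand-in for a feature store's shared definitions."""

    def __init__(self) -> None:
        self._features: Dict[str, FeatureFn] = {}

    def register(self, name: str) -> Callable[[FeatureFn], FeatureFn]:
        """Decorator that publishes a feature definition under one shared name."""
        def decorator(fn: FeatureFn) -> FeatureFn:
            if name in self._features:
                raise ValueError(f"feature {name!r} is already defined")
            self._features[name] = fn
            return fn
        return decorator

    def compute(self, names: Iterable[str], entity: Dict[str, Any]) -> Dict[str, float]:
        """Compute the requested features for one entity (e.g. one customer)."""
        return {name: self._features[name](entity) for name in names}


registry = FeatureRegistry()


@registry.register("customer_lifetime_value")
def customer_lifetime_value(customer: Dict[str, Any]) -> float:
    # Hypothetical, deliberately simple CLV formula for illustration.
    return customer["avg_order_value"] * customer["orders_per_year"] * customer["expected_years"]


# Two different teams (say churn and marketing) reuse the same definition.
customer = {"avg_order_value": 50.0, "orders_per_year": 4, "expected_years": 3}
churn_features = registry.compute(["customer_lifetime_value"], customer)
marketing_features = registry.compute(["customer_lifetime_value"], customer)
```

Refusing duplicate registrations is the design choice that matters: it forces a second team to reuse the existing definition instead of quietly creating a slightly different one.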

Choosing between managed and custom MLOps stacks

The build-versus-buy decision in MLOps depends on your team size and pain tolerance:

Small teams (1-3 ML engineers): Start with open-source tools like MLflow. You'll outgrow them, but they're cheap and fast to implement.

Medium teams (4-10 engineers): This is where custom solutions start making sense. Combine MLflow for experiment tracking, Kubeflow for pipelines, and managed services for training.

The hidden cost isn't the software—it's the operational overhead. Managed solutions cost more upfront but save engineering time. Custom solutions give you control but require dedicated DevOps resources.

Most executives underestimate how much engineering effort MLOps requires. Budget accordingly.

Governance, Monitoring, and Long-Term Optimization

Setting up model monitoring for drift and bias

Your AI system needs constant health checks—different from traditional software monitoring because the problems are subtler and more dangerous.

Data drift happens when the patterns your model learned during training no longer match what it sees in production. A customer segmentation model trained pre-pandemic might struggle with new buying behaviors. A fraud detection system might miss novel attack patterns.

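- Statistical tests like Chi-squared (for categorical features) or ANOVA (for shifts in a numeric feature's mean) flag when your data changes significantly

- Non-parametric tests such as Kolmogorov-Smirnov compare whole distributions without assuming normality, which suits messier real-world data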

- Population Stability Index tracks how much your data has shifted from baseline over time
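Two of these drift measures are short enough to compute by hand. The sketch below implements the Population Stability Index (using equal-width bins over the baseline's range, one of several common binning choices) and the two-sample Kolmogorov-Smirnov statistic for a single numeric feature. The bin count and `eps` smoothing are assumptions; if SciPy is available, `scipy.stats.ks_2samp` computes the same KS statistic along with a p-value:

```python
import math
from bisect import bisect_right


def psi(baseline, production, bins=10, eps=1e-6):
    """Population Stability Index of `production` against `baseline`.

    Uses equal-width bins over the baseline's range (quantile bins are another
    common choice); production values outside that range land in the end bins.
    eps keeps empty bins from producing log(0).
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(baseline), shares(production)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between the
    two empirical CDFs (no p-value; use a stats library for that)."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap
```

A common industry rule of thumb reads PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds worth alerting on depend on the feature and the business cost of a stale model.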

Build dashboards that make these metrics visible to business stakeholders, not just data scientists. When your customer acquisition cost suddenly jumps 30%, you want to know if it's market conditions or model drift.

Defining roles and responsibilities for AI oversight

AI governance fails when it's treated as a technology problem instead of a business problem.

Here's who actually needs to be involved:

| Role | What They Actually Do |
|---|---|
| Chief Technology Officer | Sets technical standards and infrastructure requirements |
| Chief Information Officer | Ensures data governance and access policies work |
| Chief Risk Officer | Identifies where AI decisions could backfire |
| Legal Counsel | Keeps you compliant as regulations evolve |
| Board AI Committee | Asks the hard questions about AI impact and ethics |

Building feedback loops for continuous improvement

Real AI governance isn't about perfect models. It's about systems that get better over time.

The cycle works like this: collect performance data, analyze what's working and what isn't, generate insights about needed changes, act on those insights, then measure the results. Simple in theory, but most organizations break down in the 'acting on insights' phase.

Test your reasoning regularly against known-good examples. If your fraud detection system used to catch 95% of fraudulent transactions with 2% false positives, and now it's catching 87% with 5% false positives, something fundamental has changed.
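That known-good check can run automatically on every evaluation cycle. A minimal sketch, assuming you keep a labeled set of past transactions (1 = fraud) and re-score it with the current model; the tolerance values are hypothetical and should reflect what your business can absorb:

```python
def detection_rates(y_true, y_pred):
    """Return (catch rate, false-positive rate) for binary labels, 1 = fraud."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)
    fn = sum(1 for t, p in pairs if t and not p)
    fp = sum(1 for t, p in pairs if not t and p)
    tn = sum(1 for t, p in pairs if not t and not p)
    catch_rate = tp / (tp + fn) if tp + fn else 0.0
    false_positive_rate = fp / (fp + tn) if fp + tn else 0.0
    return catch_rate, false_positive_rate


def regression_alerts(baseline, current, max_catch_drop=0.03, max_fp_rise=0.01):
    """Compare current (catch rate, FP rate) on the known-good set against the baseline.

    The tolerances are hypothetical; tune them to what your business can absorb.
    """
    alerts = []
    if baseline[0] - current[0] > max_catch_drop:
        alerts.append(f"catch rate fell from {baseline[0]:.0%} to {current[0]:.0%}")
    if current[1] - baseline[1] > max_fp_rise:
        alerts.append(f"false positives rose from {baseline[1]:.0%} to {current[1]:.0%}")
    return alerts
```

With the numbers from the example above, `regression_alerts((0.95, 0.02), (0.87, 0.05))` raises both alerts, which is the signal to hand the case to a human before acting on the model's output.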

Keep humans in the loop for high-stakes decisions. AI should make your people better at their jobs, not replace their judgment entirely.

Conclusion

FAQs

An effective AI roadmap includes clear objectives tied to business KPIs, a readiness assessment of data and infrastructure, lean pilot projects to prove value quickly, MLOps practices for scaling, and ongoing governance and monitoring systems.

Organizations should begin by mapping out existing human workflows, identifying specific pain points, and building simple automation before adding more complex AI capabilities. Starting with paper-based processes or spreadsheet prototypes can reveal how the work actually flows before any model is involved.

Common pitfalls include focusing on technology without clear business objectives, attempting to build general-purpose AI assistants instead of solving specific problems, and neglecting data quality and infrastructure readiness before implementation.

While accuracy metrics are important, the key measure of AI success is sustained user adoption after the initial novelty wears off. Companies should track whether people are still actively using AI tools after several months of implementation.

MLOps practices like CI/CD pipelines for machine learning, model versioning, and feature stores are crucial for moving from successful pilots to enterprise-wide AI deployment. They enable systematic management of code, models, and data as AI initiatives scale.

By Vaibhav Sharma